This week, we explore the defining characteristics and challenges of designing user interfaces (UI) for virtual reality environments. We caught up with VRNinja developer Alex Rossol to discuss his thoughts on the matter and the VRNinja team’s approach to communicating with players in virtual reality.

Designing UI for virtual reality games presents a unique set of challenges for developers. In traditional games, the player and their in-game avatar are always separated by a screen, even in games that use a first-person point of view. This separation allows developers to communicate information to the player that might not be available to the player avatar. For example, let’s look at the case of the N64 collect-a-thon platformer Banjo-Kazooie.

In Banjo-Kazooie, the player avatar, Banjo, has a health bar represented by honeycombs. These honeycombs are only visible to the player; they exist outside the world of the game. The health bar is for the player’s benefit alone. This is an example of non-diegetic UI, and it works just fine for many traditional games. But what happens if your player is IN the game world?

In virtual reality, there is no longer a screen separating the player from their avatar. They are literally in the game; they are their own avatar. Therefore, the use of non-diegetic UI (if it can even be called that anymore) can be problematic and immersion-breaking. The challenge then becomes how to design and implement a UI that gives players adequate information without breaking the immersion of the virtual world.

There are a couple of ways to tackle the issue, but we’ll be focusing on the methods used to craft the UI for VRNinja. The developers of VRNinja knew that they wanted to bridge the gap between in-world UI (diegetic UI) and the more traditional overlays (non-diegetic UI). However, in addition to the restrictions placed on them by the game’s virtual reality environment, VRNinja is also a controller-free game, requiring only a VR headset once the game is booted up.

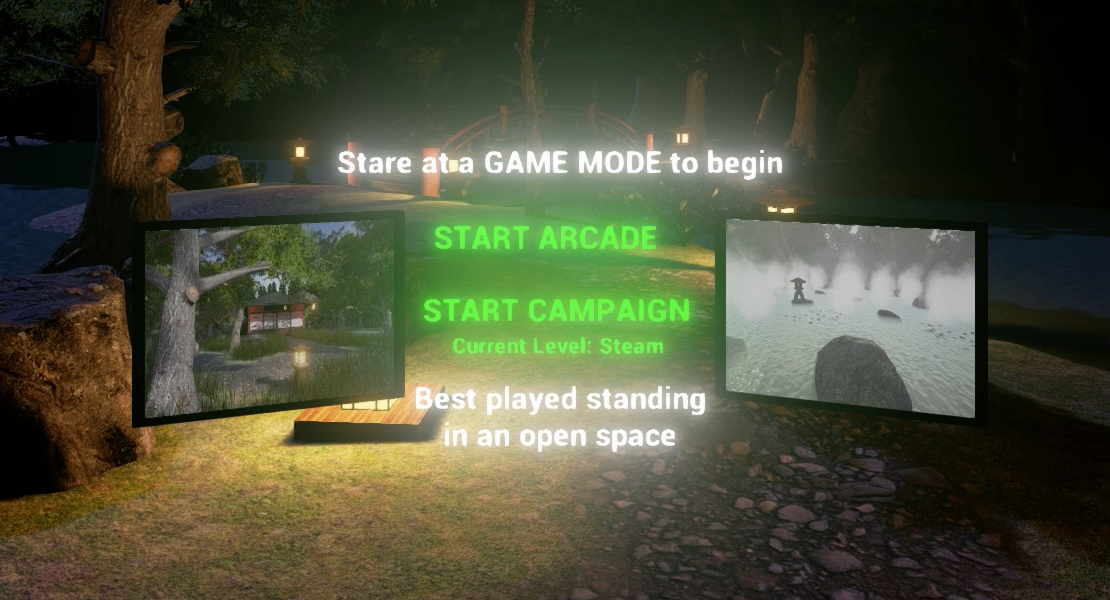

To avoid the need for a controller and still give players the ability to interact with menus, the VRNinja team made use of Unreal Engine 4’s ray casting (line trace) functions. By casting a ray along the player’s line of sight and checking which in-game object lies in the path of that ray, they were able to determine where players were focusing their attention and for how long. This “focus time” detection was used in the level selection menu. To navigate between the game’s levels, players look at a level, represented by a hovering picture frame, hold their gaze on it, and then teleport to that new location.
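The “focus time” idea can be sketched in a few lines. The example below is an engine-agnostic illustration in plain Python, not the VRNinja implementation: the `Target` and `GazeSelector` names, the spherical hit volumes, and the two-second default dwell time are all assumptions made for the sake of the example. In Unreal Engine 4 the intersection test would be handled by the engine’s own line trace rather than by hand-written math.

```python
import math
from dataclasses import dataclass


@dataclass
class Target:
    """Hypothetical selectable object, approximated by a sphere."""
    name: str
    center: tuple  # (x, y, z) position in world space
    radius: float


def ray_hits_sphere(origin, direction, target):
    """Return the distance along the ray to its closest approach to the
    sphere's center if the ray passes through the sphere, else None."""
    length = math.sqrt(sum(c * c for c in direction))
    unit = tuple(c / length for c in direction)
    to_center = tuple(c - o for c, o in zip(target.center, origin))
    along = sum(a * b for a, b in zip(to_center, unit))
    if along < 0:
        return None  # the target is behind the player
    dist_sq = sum(c * c for c in to_center) - along * along
    if dist_sq > target.radius ** 2:
        return None  # the ray passes outside the sphere
    return along


class GazeSelector:
    """Tracks how long the player has looked at one target (assumed design)."""

    def __init__(self, dwell_seconds=2.0):
        self.dwell_seconds = dwell_seconds
        self.current = None
        self.elapsed = 0.0

    def update(self, origin, direction, targets, dt):
        """Call once per frame; returns a target name when a dwell completes."""
        hits = []
        for target in targets:
            distance = ray_hits_sphere(origin, direction, target)
            if distance is not None:
                hits.append((distance, target))
        looked_at = min(hits, key=lambda h: h[0])[1] if hits else None

        if looked_at is not self.current:
            # Gaze moved to a different target (or to nothing): restart timer.
            self.current = looked_at
            self.elapsed = 0.0
            return None
        if looked_at is None:
            return None

        self.elapsed += dt
        if self.elapsed >= self.dwell_seconds:
            self.elapsed = 0.0
            return looked_at.name
        return None
```

Calling `update` every frame with the headset’s position and forward vector returns `None` until the player has held their gaze on one target for the full dwell time, at which point it returns that target’s name and the game can trigger the teleport. Resetting the timer whenever the gaze shifts is what keeps a passing glance from selecting a level by accident.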

Tackling the issue of a health bar was a touch more difficult. In the current build, after a player is hit by a weapon, there is a starburst effect to indicate the point of impact, followed by the brief appearance of a floating coloured health bar near the bottom of the player’s vision. This health bar snaps to the player’s visual focus, ensuring players won’t miss it when it appears. However, the current solution isn’t very effective for rapid dissemination of information, especially as players become more and more engrossed in the gameplay. Likewise, the “Game Over” and “Level Complete” hover text often goes unnoticed in the heat of the moment.

While it’s very rewarding to see players become so immersed in a game that they ignore any UI elements that might break that immersion, the lack of communication from the game to the player is a concern. Moving forward, we are looking to replace many of the current overlay UI elements (the health bar in particular) with a more natural “in-universe” solution, in the hope that players will more readily notice those elements if they are diegetic.

What are your thoughts on diegesis and how it is altered in a virtual reality environment? Have you developed UI for a virtual reality game? Let us know your thoughts in the comments below and be sure to subscribe to our MADSOFT Games newsletter for more VRNinja news, interviews, and behind-the-scenes development updates!

Have you tried desaturating the colors of the world as the player loses health? That could be a subtle visual cue that things aren't going well for them. You could make the player have increasing tunnel vision as they lose health to make the effect more dramatic.
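As a rough illustration of that suggestion, here is a minimal sketch in plain Python that maps remaining health to a colour saturation factor and a vignette radius. The function names, the linear mapping, and the floor values (`min_saturation`, `min_vignette_radius`) are all assumptions chosen for the example; in a real engine these values would more likely drive a post-process material than be applied per colour in script.

```python
def health_feedback(health, max_health, min_saturation=0.2, min_vignette_radius=0.4):
    """Map remaining health to (saturation, vignette_radius), both in 0..1.

    Full health gives (1.0, 1.0): full colour and no tunnel vision.
    Zero health gives the two floor values (assumed defaults).
    """
    fraction = max(0.0, min(1.0, health / max_health))
    saturation = min_saturation + (1.0 - min_saturation) * fraction
    vignette_radius = min_vignette_radius + (1.0 - min_vignette_radius) * fraction
    return saturation, vignette_radius


def desaturate(rgb, saturation):
    """Blend a linear RGB colour toward its grey level.

    saturation=1.0 leaves the colour unchanged; 0.0 yields pure grey.
    Uses the Rec. 709 luma weights to compute the grey level.
    """
    r, g, b = rgb
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    return tuple(luma + (c - luma) * saturation for c in rgb)
```

For instance, `health_feedback(50, 100)` returns values roughly halfway between the floors and 1.0, and passing the resulting saturation to `desaturate` washes a scene colour out by the same amount. Keeping a non-zero floor for both values means the world never turns fully grey or fully dark, which may matter for comfort in a headset.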