Part 1: A Survey of Game Network Models

(Skip to Part 2 Below for our choice & implementation)

My name is Dru, and I’m one of the guys working to bring The Maestros to you fine folks. If you’re not in the know, The Maestros is a team-based RTS game that allows you to transform your units on the fly. Recently, we’ve talked about how we produce the game, and how we make environment art, but today we’re going to nerd out on some tech. Specifically, how networked multiplayer works in The Maestros.

Networking in Unreal

As you might guess, Unreal Engine (both 3 & 4) is structured around the core elements needed to build Unreal Tournament (though I’m sure their marketing department would contest me on that one). As a result, it makes a few assumptions in things like its default networking model. The Unreal Engine’s default network model is a client-server model that shares many core elements with The TRIBES Engine Networking Model. This is still the white paper on writing networked multiplayer games, so if you’re making your first multiplayer game, I highly recommend reading it.

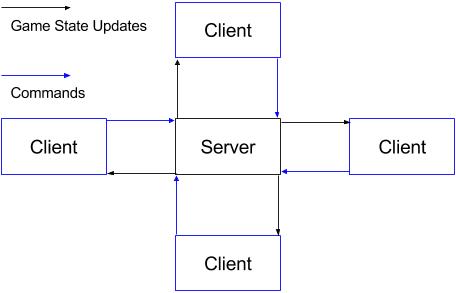

It works like this: players send commands to a central server, which makes authoritative decisions on the state of the game and publishes it to the players at frequent intervals. It excels at providing up-to-the-millisecond info on all the players in the world through high-frequency (>=20/second) messages about everybody’s status, and some prediction to fill the gaps. The longer you wait between messages, either due to tick-rate or latency, the more likely it is that the prediction is wrong. You might have moved left instead of continuing straight in that time, and then the server has to correct your client. This is where “rubber-banding” comes from.
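To put a rough number on the rubber-banding idea, here’s a minimal sketch of prediction on one axis. It is not Unreal’s actual prediction code: the `Snapshot` type, the `predict` and `correction` helpers, and the 300 units/second speed are all made up for illustration. The client extrapolates from the last server message, and the longer it waits for the next one, the further it has to snap back when it guessed wrong.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One server update for a unit, reduced to a single axis (hypothetical type)."""
    time: float  # server time of the update, in seconds
    x: float     # position along the axis
    vx: float    # velocity along the axis


def predict(snap: Snapshot, now: float) -> float:
    """Extrapolate the position, assuming the unit kept its last known velocity."""
    return snap.x + snap.vx * (now - snap.time)


def correction(predicted_x: float, new_snap: Snapshot) -> float:
    """How far the client must move the unit once the next update arrives."""
    return new_snap.x - predicted_x


# Assumed scenario: the unit was moving at 300 units/s when the last update
# was sent, then stopped immediately afterwards.
last = Snapshot(time=0.0, x=0.0, vx=300.0)

for updates_per_second in (10, 30):
    interval = 1.0 / updates_per_second
    guess = predict(last, interval)
    actual = Snapshot(time=interval, x=0.0, vx=0.0)  # the unit never moved
    snap_distance = abs(correction(guess, actual))
    print(f"{updates_per_second}/s -> client snaps back {snap_distance:.1f} units")
```

In this toy case the correction at 10 updates per second is three times the size of the one at 30, which is the kind of hitching the two clips below compare.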

Server Update Rate of 10/s on Maestros - note the hitching/rubber-banding

Server Update Rate of 30/s on Maestros - pretty smooth

This is why you don’t want to play Halo from New York with somebody in Hong Kong, for instance. Somebody is going to have a bad time.

Where the Unreal (or Tribes) model starts to break down is when the number of characters gets really large, because the amount of data you need to send starts to exceed what a common player’s internet connection can reliably transfer. Because of this, many classic RTS games with hundreds of units, like Starcraft, Age of Empires, Warcraft, Total Annihilation, etc., take a different approach.

Networking in Classic RTS Games

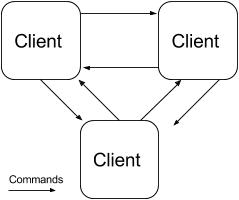

Instead of one authoritative server, these classic RTS games use a peer-to-peer model where every player sends their commands to every other player. In some ways, this is really cool - you don’t need any dedicated servers because the players’ computers make up the game. Unfortunately, players’ computers can vary widely in power and connection speed, and without a central authority, they all need to operate at the same pace. This means the player with the worst latency to any other player dictates the game’s responsiveness for everyone.

In peer-to-peer, players will also be initiating connections directly to one another, something that home firewalls are specifically built to stop. In this situation, workarounds like port forwarding or NAT punchthrough become necessary. If you’ve ever played Starcraft: Brood War and you couldn’t join a friend’s lobby, so you made your own lobby, had them try to join, and then had that friend host again, you were essentially performing a manual NAT punchthrough. These days, Age of Empires II HD uses a proxy to resolve this problem, but that also increases latency.

As you can see in the model above, only commands are ever sent over the wire, no game states. In this peer-to-peer model, the game state is maintained by simulating the game identically on every machine, i.e. the simulation must be deterministic. This model for multiplayer games is known as Deterministic Lockstep, and is described beautifully in 1500 Archers on a 28.8: Network Programming in Age of Empires and Beyond (again, this is a little piece of internet gold, highly recommended).

In Deterministic Lockstep, players have to execute every other player’s commands at the same point in the simulation on every machine or else the simulations will start to diverge. This means every client has to wait until they have the commands from every other client in order to execute them - talk about input lag. Fortunately for Real Time Strategy games, issuing a command and then having a unit execute it tens or even a couple hundred milliseconds later doesn’t break the game feel, because it’s an indirect action. You aren’t your marine, and your brain makes sense of the fact that it’ll take a moment for him to react to your order. In Unreal Tournament, however, you ARE your character, and it would feel completely broken if your character took 200ms to react when you dodged left.
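Here’s a minimal sketch of that waiting rule, assuming a turn-based command buffer. The `LockstepSim` class, its method names, and the two-turn input delay are hypothetical and not taken from any of the games mentioned. A turn only executes once commands (even an empty list) have arrived from every player, and commands run in a fixed player order so every machine applies them identically.

```python
class LockstepSim:
    """Toy deterministic-lockstep command buffer (illustrative only)."""

    def __init__(self, player_ids, input_delay_turns=2):
        self.players = set(player_ids)
        self.input_delay_turns = input_delay_turns  # assumed delay, in turns
        self.turn = 0
        self.pending = {}   # turn number -> {player_id: [commands]}
        self.executed = []  # (turn, player_id, command), in execution order

    def schedule_turn(self):
        """Turn on which a command issued right now should execute."""
        return self.turn + self.input_delay_turns

    def receive(self, player_id, turn, commands):
        """Store one player's commands for a turn (may be an empty list)."""
        self.pending.setdefault(turn, {})[player_id] = list(commands)

    def step(self):
        """Run the current turn if every player has reported; otherwise stall."""
        received = self.pending.get(self.turn, {})
        if set(received) != self.players:
            return False  # still waiting on somebody: this is the input lag
        for player_id in sorted(self.players):  # same order on every machine
            for command in received[player_id]:
                self.executed.append((self.turn, player_id, command))
        self.pending.pop(self.turn, None)
        self.turn += 1
        return True


sim = LockstepSim(["p1", "p2"])
sim.receive("p1", 0, ["move 100 200"])
print(sim.step())  # False: p2 has not reported for turn 0 yet
sim.receive("p2", 0, [])
print(sim.step())  # True: everyone reported, so the turn executes
```

The slowest player to report holds up `step()` for everyone, which is the pacing problem described above.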

Part 2: How We Built It

Choosing a Network Model

It turns out that determinism is quite an endeavour - on PC you’re dealing with effectively infinite hardware profiles, and nondeterministic behavior shows up in the darnedest places - virtual machines for certain languages, differing compilers, it might even come up in floating point numbers. To make things worse, as independent UDK developers we don’t have access to change the underlying engine code in Unreal 3. All in all, we ran too high a risk of running into non-deterministic behavior that we simply could not overcome.

This left us with the traditional Tribes/Unreal model with high bandwidth requirements. In an RTS with thousands of units moving at once, the per-player bandwidth requirements would have been too restrictive - we wouldn’t have been able to make the game. Fortunately, we wanted The Maestros to be something a little smaller, a lot scrappier, and much, much faster. The update frequency of the Unreal networking architecture would give us excellent responsiveness - one of our core design pillars. With a small enough unit cap, the bandwidth requirements wouldn’t hinder players either, as long as they had modern internet connections.

We also wanted the game to be accessible for new players, and the hassle of configuring routers and/or firewalls wasn’t something we wanted players to deal with. We were also going to need servers with pretty large outbound bandwidth, which many home internet connections wouldn’t be able to support. For these reasons, we settled on a dedicated server model, putting the onus on us to host game servers.

Will It Work?

We didn’t take this decision lightly, though. We started with a few assumptions. One, our users would need a reliable 1 Mbps internet connection to play The Maestros. This definitely doesn’t apply to every potential player, but after hearing about others coming to the same conclusions, we felt reassured that it was still worth building. How did we know this was good enough for such a bandwidth-intensive network model? First, we did some naive math. If the x, y, z of both position and velocity are stored as 4-byte floats, sending them 30 times a second gives you: 3 * 2 * 4 * 30 = 720 bytes / second / unit. Ok, and we’ve got a megabit per second of data, which is 1/8th of a megabyte = (1024 * 1024) / 8 = 131072 bytes per second. So our theoretical cap for moving units, for all players at a single point in time, should be 131072 / 720 = ~182 units. Now, this is little more than a gut-check number, given how much more complex things could be, but 150-200 units was just enough for us to make a compelling RTS.
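The same gut-check math as a few lines of Python, in case you want to plug in your own update rate or bandwidth budget. The constant names are just for illustration; the numbers are the ones from the paragraph above.

```python
FLOAT_BYTES = 4            # one 4-byte float
COMPONENTS = 3             # x, y, z
VECTORS = 2                # position and velocity
UPDATES_PER_SECOND = 30

# Naive cost of streaming one moving unit.
bytes_per_unit_per_second = COMPONENTS * VECTORS * FLOAT_BYTES * UPDATES_PER_SECOND

# One megabit per second, expressed in bytes per second.
budget_bytes_per_second = (1024 * 1024) // 8

# Whole units that fit in the budget.
max_moving_units = budget_bytes_per_second // bytes_per_unit_per_second

print(bytes_per_unit_per_second)  # 720
print(budget_bytes_per_second)    # 131072
print(max_moving_units)           # 182
```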

As soon as we got the basics of our game up and running in-engine, we put our math to the test. Unreal performs many improvements on the naive model we described above, so we were able to get 200 units moving on the screen simultaneously with little effort, after aggressively pushing the maximum bandwidth per client upwards. We used the ‘net stat’ command in UDK to monitor our network usage. Here’s a snapshot of the stats with nearly 200 units moving at once.

In a 3v3 match, we put the cap for each player at ~20 units, leaving half again as many for neutral monsters that players would fight around the map.

Client to Server Communication

We expected moving units to be our biggest bandwidth hog, but there is a whole category of issues outside of server -> client position updates that we had to solve. Fortunately, we had a lot more freedom to come up with answers. First question: how do we tell the server to start moving units in the first place?

In Unreal, there are two primary ways to send data between client & server: replicated variables, and Remote Procedure Calls (RPC). In UDK, replicated variables are sent from the server to the clients every so often if they have changed, and they can trigger events when the client receives them (i.e. isFlashlightOn goes from false to true and causes an animation to play). RPCs are just functions that a client can tell the server to execute, or vice versa. A “server” function will always be called on the server, and a “client” function will always be executed on the relevant client.
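If the distinction is new to you, here’s a toy model of the two ideas in Python. To be clear, this is not UDK’s API: the `Server` and `Client` classes and every method name are invented for illustration. Replicated variables flow from server to client only when they have changed, and can trigger an event on arrival. An RPC is just the client asking the server to run a function.

```python
class Client:
    """Toy client: stores replicated values and records the events they trigger."""

    def __init__(self):
        self.replicated = {}
        self.events = []

    def on_replicated(self, name, value):
        # In a real game this is where, say, a flashlight animation would start.
        self.replicated[name] = value
        self.events.append((name, value))


class Server:
    """Toy server: replicates changed variables and executes 'server' functions."""

    _NEVER_SENT = object()

    def __init__(self, clients):
        self.clients = clients
        self.state = {}
        self.last_sent = {}
        self.rpc_log = []

    def set_var(self, name, value):
        self.state[name] = value

    def replicate(self):
        """Called every so often: send only the variables that changed."""
        for name, value in self.state.items():
            if self.last_sent.get(name, self._NEVER_SENT) != value:
                for client in self.clients:
                    client.on_replicated(name, value)
                self.last_sent[name] = value

    def server_move_units(self, unit_ids, location):
        """Stand-in for a 'server' RPC: requested by a client, run on the server."""
        self.rpc_log.append(("MOVE", tuple(unit_ids), location))


client = Client()
server = Server([client])

server.set_var("isFlashlightOn", False)
server.replicate()
server.set_var("isFlashlightOn", True)
server.replicate()
server.replicate()  # nothing changed, so nothing is sent

server.server_move_units([1, 2, 3], (100.0, 200.0, 300.0))  # client -> server
print(client.events)
print(server.rpc_log)
```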

The First Command Payload

So obviously, our commands need to be sent as server RPCs if we want a client to tell the server to do something - like move their units from one place to another. The next thing to determine was the payload. We wanted to come up with something generic that would encompass any kind of command - attack, move, use an ability, etc. Our first pass was sending a json string over the wire with a location and unit IDs. A move command for a full army might have looked something like this:

{"commandType":"MOVE","unitIds":[1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21],"location":{"x":100.0,"y":200.0,"z":300.0}}

JSON strings are certainly generic, and we could imagine an attack or ability command having unitIds and targetUnitId, etc. There are ~130 characters in that string, so theoretically ~130 bytes in each payload. When tested in-engine, with whatever function data and reliable-transfer data the engine adds, the full call turns out to be ~260 bytes, and we got up to about 8,000 B/s by clicking around as fast as we could. That’s pretty high, but well under a typical player’s outbound bandwidth. It doesn’t help that we’d also have to send those commands to each player (in most cases), adding up to 48 KB/s to each player’s already-taxed download speed.
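You can sanity-check those numbers with a quick script. This is a rough sketch, not our engine code: it rebuilds the example command with Python’s `json` module, and it assumes the 48 KB/s figure comes from six players (a 3v3) each uploading at the ~8,000 B/s peak.

```python
import json

# The example move command from above, for a 21-unit army.
command = {
    "commandType": "MOVE",
    "unitIds": list(range(1, 22)),
    "location": {"x": 100.0, "y": 200.0, "z": 300.0},
}

# Compact separators so no extra whitespace is counted.
payload = json.dumps(command, separators=(",", ":"))
payload_bytes = len(payload.encode("utf-8"))
print(payload_bytes)  # bytes before the engine adds any overhead

# Assumption: every player in a 3v3 is clicking at the measured peak rate,
# and each of those command streams is relayed to a given player.
PLAYERS_IN_3V3 = 6
PEAK_UPLOAD_BYTES_PER_SECOND = 8000
worst_case_download = PLAYERS_IN_3V3 * PEAK_UPLOAD_BYTES_PER_SECOND
print(worst_case_download)  # 48000
```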

A Smaller Command Payload

If you’ve ever made a real-time networked multiplayer game before though, all this probably sounds pretty ridiculous to you. Keep in mind that this was a bunch of college students, trying their hand at multiplayer game programming ;) In reality, that payload is much larger than it needs to be, and the time to serialize and deserialize those strings could really hamper performance. When we put this into practice, more than 2 players in a game tanked server performance to unplayable levels.

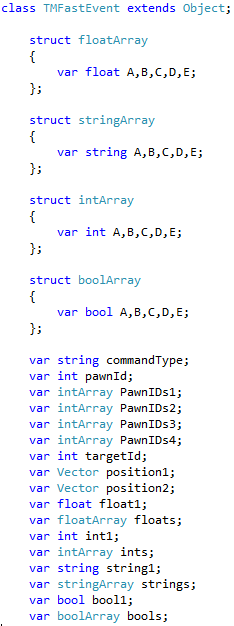

So we went back to the drawing board. JSON strings inherently carry name information (e.g. “commandType”) that is unnecessary if a strict order for each piece of data is maintained. For example, commandType will always be the first four bytes, unitIds will always be an array after that, etc. Additionally, Unreal Engine wasn’t built to tightly pack strings for network transfer. Floats, vectors, and integers, however, are Unreal Engine’s bread and butter, and they’re much cheaper for it to serialize and deserialize. In came the idea of the “FastEvent”, which was just a tiny little object packed with primitive data that could be efficiently serialized, transferred, and deserialized. A movement payload still contains 21 integers, a 3-float vector and a 4-character string (21 * 4 + 3 * 4 + 4 = 100 bytes), but when we give Unreal these raw types, our typical command goes from 260 bytes to 110. Clicking around as hard as we could would barely tip 2,000 B/s in upload speed. Packing and unpacking those primitives into little objects didn’t cause so much as a flutter in the CPU usage either, and our 3v3 games became buttery smooth.
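The fixed-order idea is easy to try outside the engine. Here’s a minimal sketch using Python’s `struct` module. It only illustrates the layout described above (a 4-byte command type, 21 integers, 3 floats) and is not how Unreal serializes RPC arguments. The format string and the `pack_move` and `unpack_move` helpers are made up for this example, and using 0 to mean “empty slot” is an assumption.

```python
import struct

MAX_UNITS = 21
# "<" = little-endian with no padding; 4s = 4-byte string,
# 21i = 21 four-byte ints, 3f = 3 four-byte floats.
FAST_EVENT_FORMAT = f"<4s{MAX_UNITS}i3f"


def pack_move(command_type: bytes, unit_ids, location) -> bytes:
    """Pack a command into a fixed layout; unused unit slots are padded with 0."""
    padded = list(unit_ids) + [0] * (MAX_UNITS - len(unit_ids))
    return struct.pack(FAST_EVENT_FORMAT, command_type, *padded, *location)


def unpack_move(data: bytes):
    """Reverse of pack_move. No field names on the wire: position is meaning."""
    fields = struct.unpack(FAST_EVENT_FORMAT, data)
    command_type = fields[0]
    unit_ids = [u for u in fields[1:1 + MAX_UNITS] if u != 0]  # 0 = empty slot
    location = fields[1 + MAX_UNITS:]
    return command_type, unit_ids, location


data = pack_move(b"MOVE", range(1, 22), (100.0, 200.0, 300.0))
print(len(data))  # 100
print(unpack_move(data))
```

The 100 bytes match the arithmetic in the paragraph above, versus roughly 130 for the JSON string before any engine overhead.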

We even had space to fit a bunch more floats, booleans, and vectors used by other commands, and our theoretical max payload still sat pretty at ~160 bytes. Of course, UnrealScript didn’t make this transition terribly simple. You can’t pass objects over RPC functions, and you can’t really pass arrays either. On top of that, there’s a maximum number of arguments, so you can’t very well send all those unit IDs one by one. This left us with strings, primitives, and structs of primitives. We settled on an object that looked like the image below.

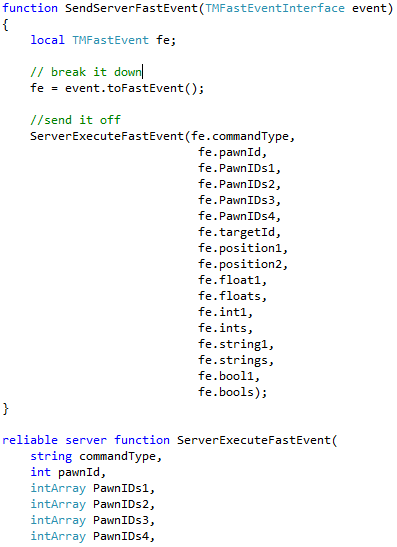

Yep, we had to hand-build arrays as structs. Coding-terror aside, each command had its own object that implemented a simple interface with one method for translating the specific command into a generic FastEvent. Once it was translated, we would manually unpack each variable into a function argument, so that each one could be individually disregarded if it was 0 or null-type. Then we would piece the FastEvent back together on the other side, and translate it back into its specific command type to be processed by an appropriate handler. It looked something like the snippet below.
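For readers who don’t speak UnrealScript, here’s the same round trip sketched in Python. This is an illustration of the flow only, not our actual code: `FastEvent`, `MoveCommand`, `to_wire_args`, and `from_wire_args` are hypothetical names, and treating unit ID 0 as an empty slot is an assumption. A specific command translates itself into a generic event, fields still at their zero or empty defaults are dropped before sending, and the receiver rebuilds the event and then the specific command.

```python
from dataclasses import dataclass, fields

MAX_UNITS = 21


@dataclass
class FastEvent:
    """Generic event made only of primitives; defaults mean 'not set'."""
    command_type: str = ""
    unit_ids: tuple = (0,) * MAX_UNITS  # fixed-size stand-in for a hand-built array
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0
    target_unit_id: int = 0


class MoveCommand:
    """One specific command type with the single translation method."""

    def __init__(self, unit_ids, location):
        self.unit_ids = list(unit_ids)
        self.location = tuple(location)

    def to_fast_event(self) -> FastEvent:
        padded = tuple(self.unit_ids) + (0,) * (MAX_UNITS - len(self.unit_ids))
        x, y, z = self.location
        return FastEvent(command_type="MOVE", unit_ids=padded, x=x, y=y, z=z)

    @classmethod
    def from_fast_event(cls, event: FastEvent) -> "MoveCommand":
        unit_ids = [u for u in event.unit_ids if u != 0]  # assumes 0 = empty slot
        return cls(unit_ids, (event.x, event.y, event.z))


def to_wire_args(event: FastEvent) -> dict:
    """Keep only the fields that differ from their default, as call arguments."""
    return {
        f.name: getattr(event, f.name)
        for f in fields(event)
        if getattr(event, f.name) != f.default
    }


def from_wire_args(args: dict) -> FastEvent:
    """Receiving side: missing arguments fall back to their defaults."""
    return FastEvent(**args)


sent = MoveCommand([1, 2, 3], (100.0, 200.0, 300.0))
args = to_wire_args(sent.to_fast_event())
received = MoveCommand.from_fast_event(from_wire_args(args))
print(sorted(args))  # target_unit_id is skipped for a move command
print(received.unit_ids, received.location)
```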

It’s quite the function call, but it’s performed beautifully, and upload bandwidth hasn’t been a problem since!

As always, technology places certain limitations on game developers, but in The Maestros’ case, working with small unit caps has really allowed us to make a game that looks like nothing else out in the genre right now, which has been pretty cool.
