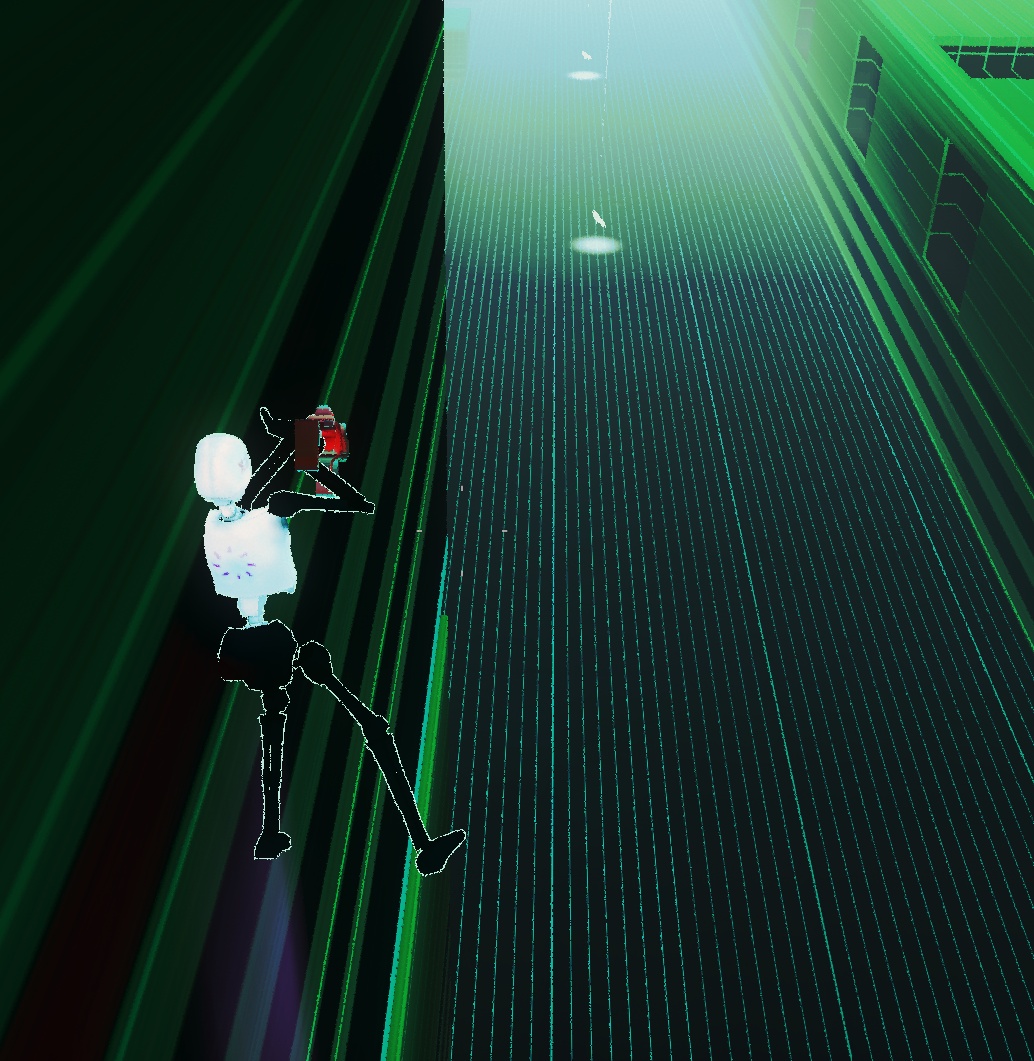

11th Dream is a fast abstract third person shooter. It is based on high difficulties requiring inhuman concentration. It targets fans of hard-core game genres and speedrunners.

![]() 11th Dream on Steam Store Page

11th Dream on Steam Store Page

![]() Ask us anything on our Discord Server!

Ask us anything on our Discord Server!

At the beginning of the match, players choose from three basic and three additional specializations. The resulting mix affects the style of play - speed, damage and endurance of the character. Players compete for blue crystals from destroyed bots. By collecting crystals, specialization increases exponentially.

In addition to synchronous multiplayer and asynchronous Daily mode, the game offers a story campaign speculating about the future, in which artificial intelligence overcomes human history.

To bring vapor of dream gaming to your screen, we are going through rather bizarre development flow using our own custom engine. Absence of accessible technology supports our imagination, breaking visuals and also drives us to create new artistic approaches to fast 3D shooter genre.

Key Features

- Single-Player Story Campaign

- Multiplayer

- Fast Paced Hardcore Game-play

- Arena Skirmish Maps

- Daily challenge and scoreboards

- In-Game Level Editor

“You should add photosensitivity warning!”

-J. Doe

0X0010 IN HISTORY

0X0020 BEFORE BOTS

0X0030 BIOLOGICAL MIND

0X0040 CREATED TOOLS FOR LACK

0X0050 OF POWERS AND FATIGUE

0X0060 TOOLS AGAINST UNCOMFORT OF WORK

0X0010 AFTER BIOLOGICAL HISTORY

0X0020 WHEN BOTS BECAME

0X0030 TOOLS FOR OTHER TOOLS

0X0040 WITH NO WORK

0X0050 BUT UNCOMFORTABLE.

0X0060 THE SYSTEM STARTED TO FAINT

Why custom physics?

11th Dream simulation runs on our limited collection of physics related functions. Everyone with a game-dev experience would ask, why did we decide to skip existing third-party physics engines and rely on our own.

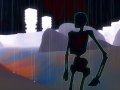

When the development started, all the movement and collision systems were rather simple. Static objects were using axis-aligned boxes for their collision geometry and characters were represented as 3D points. Terrain was a simple bitmap of heights. Projectiles were moving slowly enough to represent them as points, too. For these primitives, importing tens of thousands of lines of code seemed unreasonable.

Box vs. point is no rocket science.

As we progressed with prototyping, we needed to develop some collision queries for non-player characters (NPC). To confirm that NPC is in line-of-sight, raycasts were introduced. Later on, we realized that making collision boxes by hand slows us down and that it is a tedious job. It felt natural to add triangle mesh as a new type of collider to speed up map creation. Character representation was changed to a bounding box.

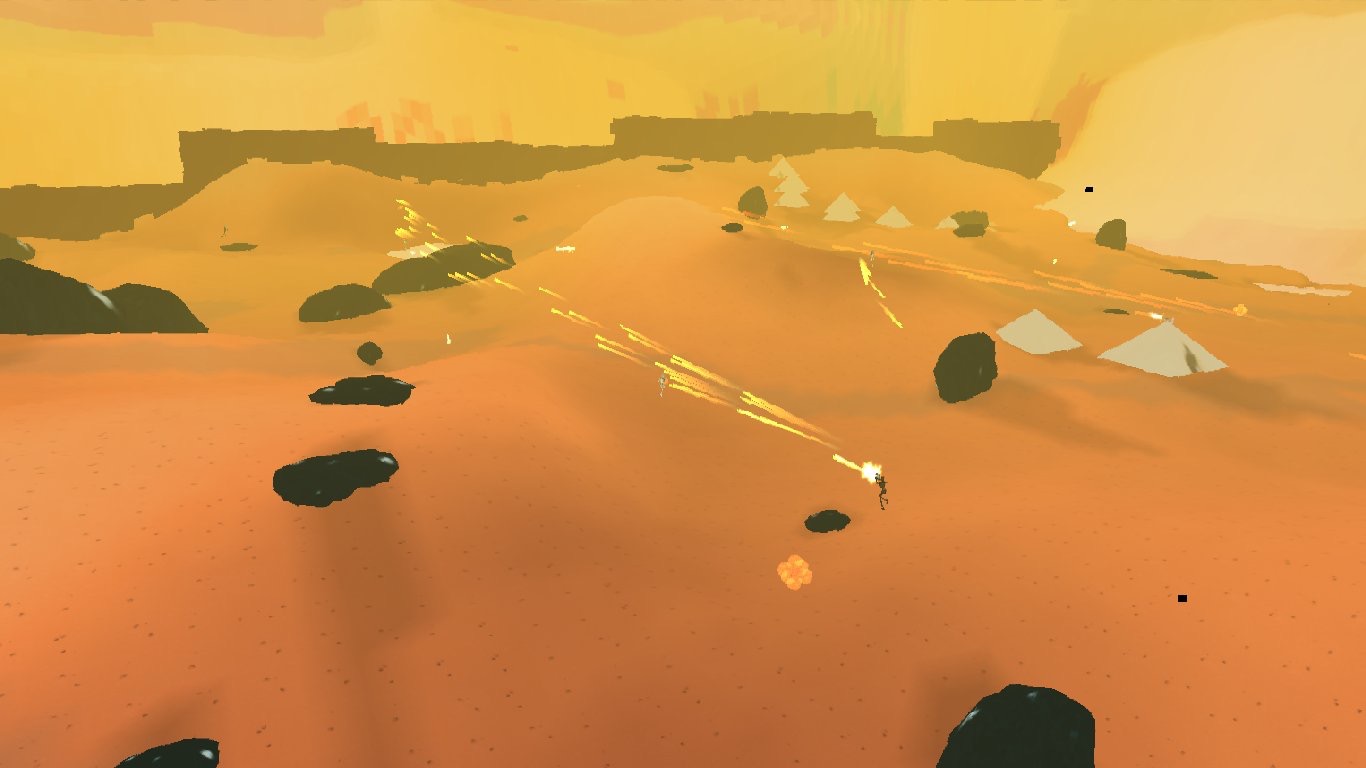

Screenshot from early development version of 11th Dream. Imagine points, boxes and raycasts and you get an almost perfect picture of the simulation at that time.

The point is probably clear. When we started, the physics scope was too small to use a third party engine. For today’s state, we would choose it right away, but our code (which has grown slowly over time) is already in place.

Algorithm

It is quite easy to find collision algorithms online, but much harder to discover their use in character motion. Therefore, we do not describe here the details how to compute tri-vs-box collision or build a collision hierarchy (but we provide some links below this article).

Our character is represented by an axis-aligned bounding box. When the simulation ticks, the character tries to move to a new position. We perform collision detection in two steps.

Resolve support

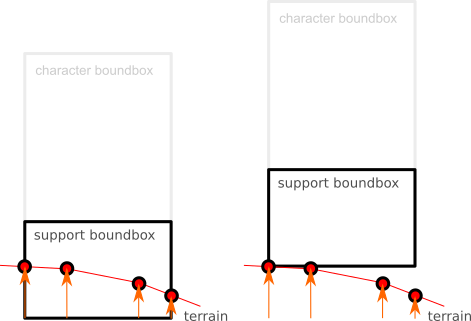

Legs of in-game character are bounded by an additional box which is used to determine whether the character is grounded (supported). Boxcast is performed and the topmost contact point sets the new elevation. Note that this affects only y-position of the character to prevent unwanted sliding on slightest slopes!

After the character is moved, we perform a second boxcast, this time with full character box, to check whether we ended up in free space. If this is true, the algorithm ends here. Otherwise we need to continue to next step.

Other collision detection

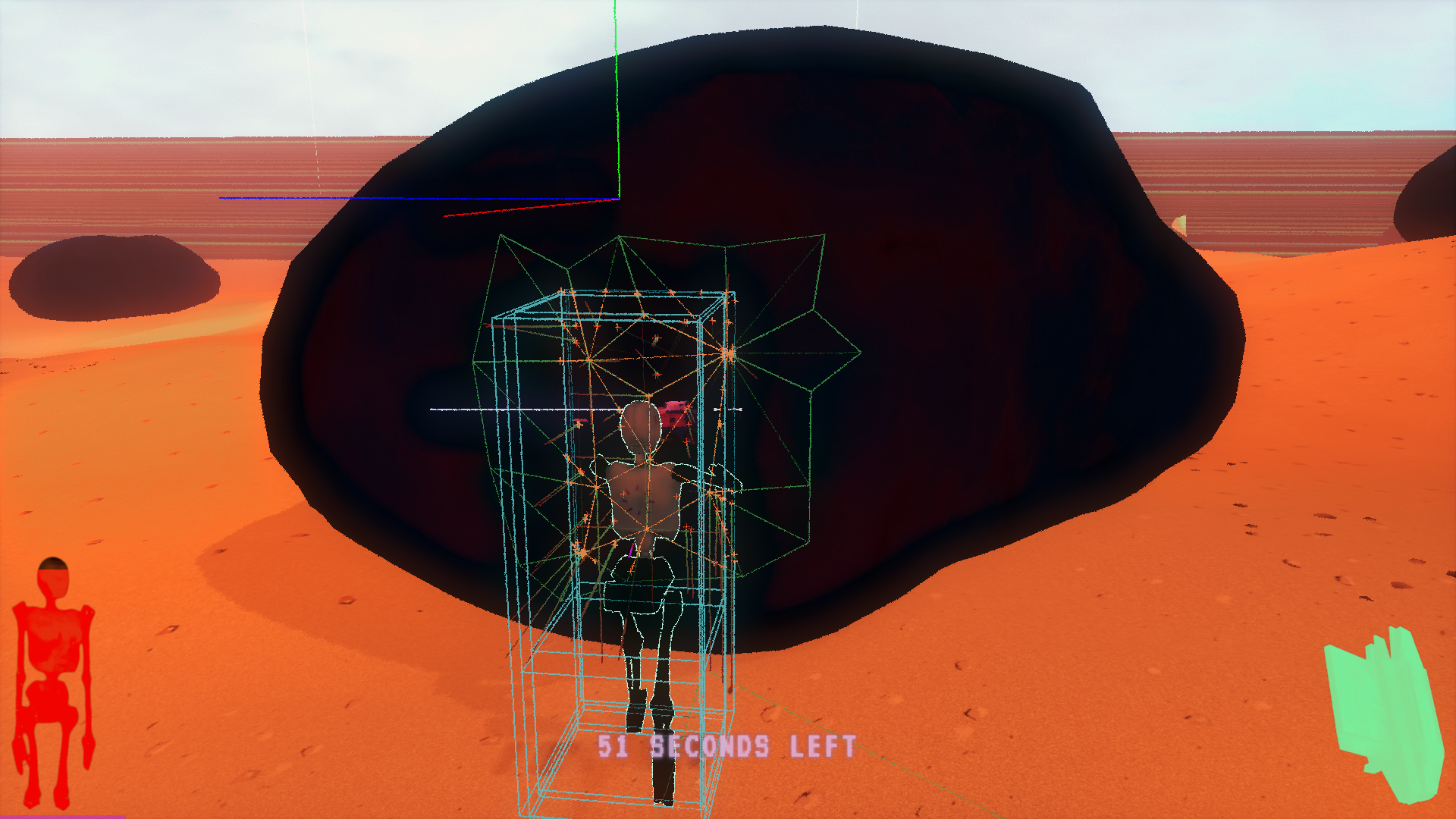

Now the character is in trouble, so we make use of the box cast. Each collected contact point contains position, resolution normal and penetration depth. The position describes where the contact happened, the resolution normal tells us which direction to move away from collision and penetration depth tells us how far.

However, we cannot simply pick first contact point, move the character and pretend that we are done. Some of reported contact points can be still inside of the character’s box! This is exactly the mistake we did in the previous version. We must pick a contact point such as:

- penetration depth is minimal,

- all the other contact points are moved out after correction.

Afterwards, we repeat the algorithm and resolve support once more. In some cases it is possible that no solution is found even after few iterations and then the character must be moved to last recorded safe position, otherwise it can end up inside something.

Colliding triangles when pushing against boulder.

Usage in vertical sliding

Being able to slide up when pushing against the obstacles, that is something we introduced by mistake. But it felt so good! Later we discovered that it was (of course) the support resolution which caused this funny mechanic. With today’s update, we reforged it from a bug to consistent behavior.

The implementation was in the end very simple: Is the character touching anything? Yes? Good, you can slide up!

Sliding up!

Conclusion

We think it is important to share the knowledge and discuss it – either someone may learn from our successes and mistakes, or quite oppositely they can point out imperfections we missed. We will happily answer your questions.

Big thanks goes to Daniel Ilha for reviewing this blog post. This article was originally posted on our blog we gladly share it here with you. The update of collision system is now live on Steam!

Links

- Ericson, Christer. Real-Time Collision Detection. 2004. ISBN 978-1558607323.

- Collection of collision detection algorithms at the finest level.

- Gabor Szauer. Game Physics Cookbook. 2017. ASIN B01MDLX5PH.

- Collection of collision detection algorithms at the finest level.

- kdrnic. Triangle-Box SAT test in Javascript. Github.com

- Sample implementation of tri-box SAT test in Javascript. Different language than we use and that’s sometimes better, it forces to think about the problem, not just copy blocks of code.

- Wikipedia. Bounding Volume Hierarchy. En.wikipedia.org

- BVH is one of the options how to speed up collision queries. 11th Dream uses BVH for organizing static objects.

Artificial Intelligence in 11th Dream

FeatureHow does the artificial intelligence work in 11th Dream? Let's look behind the curtains!

Weather Effects in 11th Dream

News 1 commentIn 11th Dream, weather plays an important role in nostalgic game atmosphere. We would like to share with the core ideas behind our implementation of weather...

Noise Map and Updated Jump

News11th Dream has received a new skirmish map - Noise. We've also tweaked jumping of characters to make the take-off smoother.

New skirmish arenas in 11th Dream

NewsThis week we are adding two new skirmish arenas to 11th Dream - Desert and Hills.

Interesting style!

Thank you!